TrustRespond.ai

Building TrustRespond.ai: automating B2B vendor security questionnaires in about 12 seconds.

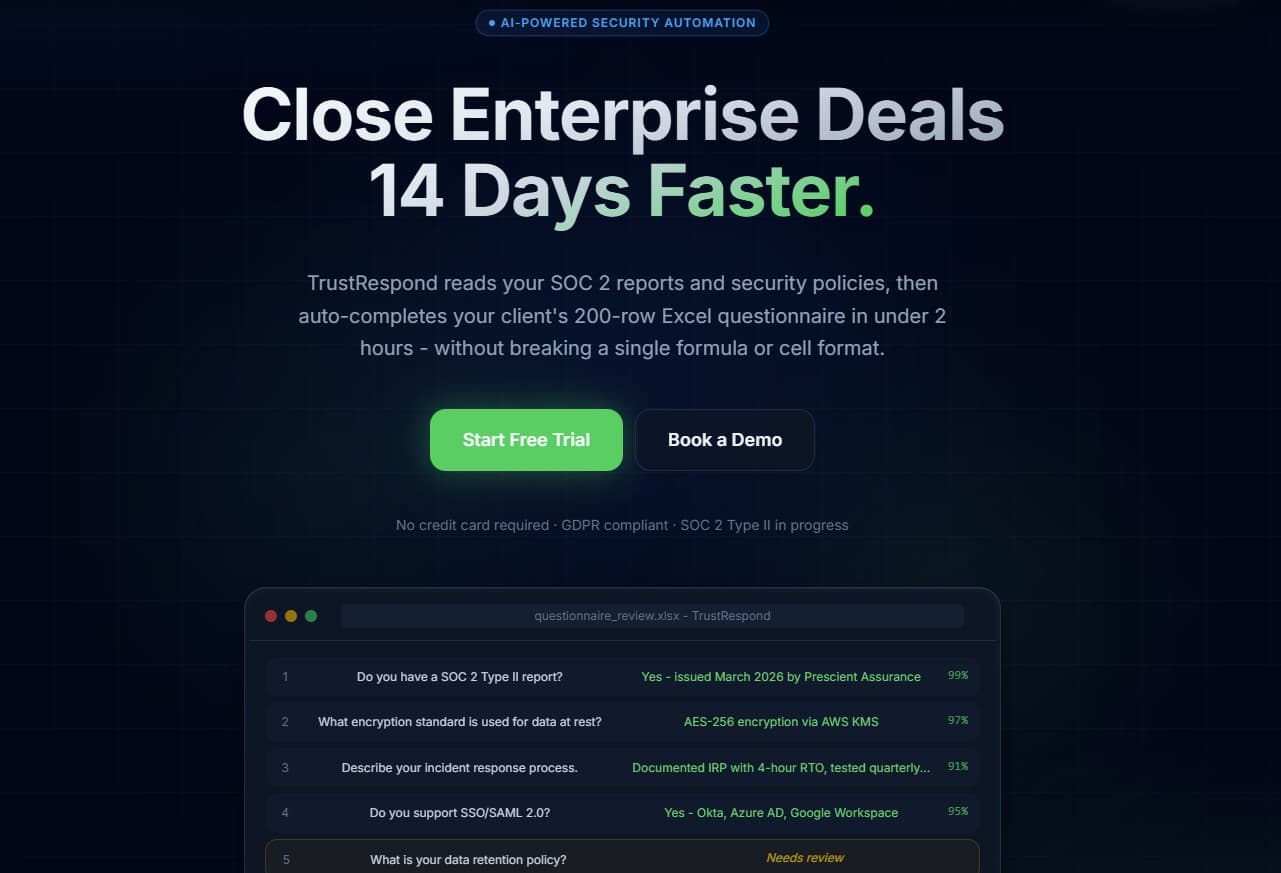

The enterprise compliance bottleneck

Every B2B SaaS company closing enterprise deals faces vendor security questionnaires: huge Excel files from IT asking granular questions about data security, SOC 2, and policies. Mapping internal documents into those sheets by hand takes weeks. Along the way formatting breaks, context is lost, and sales cycles slip.

The solution

TrustRespond.ai turns that multi-week bottleneck into an automated workflow. It securely ingests compliance PDFs into a vector database, reads the client's blank Excel questionnaire, matches each question to supporting passages with a retrieval-augmented generation (RAG) pipeline powered by Gemini, and outputs a fully populated spreadsheet that preserves the original layout.

The platform is built for enterprise-grade security and scale: tenant-isolated data, server-side orchestration, and Stripe-backed tiers so usage and quotas stay authoritative in the database rather than in fragile client state.

Architecture & data flow

Documents are chunked and embedded into pgvector; questionnaire generation runs through the app layer with Gemini via the Vercel AI SDK. Stripe webhooks keep subscription state and org quotas in sync with Postgres.
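As a rough illustration of the chunking step, here is a minimal sketch of a fixed-size splitter with overlap. The write-up does not say how documents are actually split, so the character-based window, the `chunkText` name, and the default sizes are all assumptions for illustration only.

```typescript
// Hypothetical helper: splits text into fixed-size character windows that
// overlap, so a sentence cut at one boundary still appears whole in a neighbor.
// The sizes are placeholder defaults, not values taken from the project.
function chunkText(text: string, chunkSize = 1000, overlap = 200): string[] {
  if (!Number.isInteger(chunkSize) || chunkSize <= 0) {
    throw new RangeError("chunkSize must be a positive integer");
  }
  if (!Number.isInteger(overlap) || overlap < 0 || overlap >= chunkSize) {
    throw new RangeError("overlap must be an integer in [0, chunkSize)");
  }

  const chunks: string[] = [];
  const step = chunkSize - overlap; // how far the window advances each time

  for (let start = 0; start < text.length; start += step) {
    chunks.push(text.slice(start, start + chunkSize));
    // Stop once the current window has reached the end of the text.
    if (start + chunkSize >= text.length) break;
  }
  return chunks;
}
```

Each returned chunk would then be embedded and stored as one pgvector row. A production splitter would more likely break on token counts or paragraph boundaries than on raw characters.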

Technical stack

- Framework: Next.js 15 (App Router) for a server-first architecture and cohesive API routes.

- Database & auth: Supabase (PostgreSQL) with authentication, Row Level Security (RLS), and durable app state.

- Vector search: pgvector for storing and querying document embeddings at scale.

- AI: Google Gemini 2.5 Flash via the Vercel AI SDK, chosen for fast responses, a large context window, and reliable structured JSON for mapping and Excel output.

- Monetization: Stripe Checkout and webhooks for tier management and quota allocation per organization.

- UI: Tailwind CSS with a custom enterprise look: glass surfaces, a deep navy palette, and emerald accents.

Key engineering challenges

PostgreSQL statement limits during ingestion

Issue: Large PDFs (100+ pages) produce many embedding rows. A single bulk insert of hundreds of vectors could hit Postgres/Supabase complexity limits and fail with errors such as statement_too_complex (54001).

Fix: I built a batching utility that splits inserts into smaller chunks (for example ~50 rows per batch), processes them sequentially, and keeps memory and database load predictable for arbitrarily large documents.
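The batching idea can be sketched roughly as follows. This is not the project's actual utility: `chunk`, `insertInBatches`, and the injected `insertBatch` callback are hypothetical names, and the default of 50 simply mirrors the approximate figure mentioned above.

```typescript
// Split an array into consecutive slices of at most `size` items.
function chunk<T>(items: readonly T[], size: number): T[][] {
  if (!Number.isInteger(size) || size <= 0) {
    throw new RangeError("size must be a positive integer");
  }
  const batches: T[][] = [];
  for (let i = 0; i < items.length; i += size) {
    batches.push(items.slice(i, i + size));
  }
  return batches;
}

// Insert rows one batch at a time, awaiting each batch before starting the
// next so only one statement is in flight at any moment.
// `insertBatch` is injected (an assumption of this sketch) so the helper does
// not depend on a particular database client.
async function insertInBatches<T>(
  rows: readonly T[],
  insertBatch: (batch: T[]) => Promise<void>,
  batchSize = 50,
): Promise<number> {
  let inserted = 0;
  for (const batch of chunk(rows, batchSize)) {
    await insertBatch(batch); // a thrown error stops the remaining batches
    inserted += batch.length;
  }
  return inserted;
}
```

With Supabase, `insertBatch` would presumably wrap a call such as `supabase.from(...).insert(batch)` and throw when the returned `error` is set. Running batches sequentially instead of with `Promise.all` trades some throughput for a bounded load on the database.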

RLS recursion on vector reads

Issue: Questionnaire generation queried vectors through an RPC while RLS policies referenced helpers like current_org_id(), which could recurse deeply enough to exceed stack limits during complex reads.

Fix: I isolated the internal RAG retrieval path: server-side reads use the service role with carefully scoped queries so generation stays fast and recursion-free, while tenant isolation remains enforced at the API boundary.
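Because the Supabase service role bypasses RLS, the tenant filter has to be applied explicitly on this path. A minimal sketch of that discipline, under stated assumptions: the RPC name `match_document_chunks`, its argument names, and the `RpcClient` interface are all hypothetical. The interface only mirrors the `{ data, error }` result that awaiting a supabase-js `rpc(...)` call yields.

```typescript
// Minimal client shape this sketch needs (an assumption, not the real client type).
type RpcResult = { data: unknown; error: { message: string } | null };
interface RpcClient {
  rpc(fn: string, args: Record<string, unknown>): PromiseLike<RpcResult>;
}

// Hypothetical argument names for a hypothetical similarity-search RPC.
type MatchArgs = {
  org_id: string;
  query_embedding: number[];
  match_count: number;
};

// Build the RPC arguments, refusing to proceed without a tenant id.
// On a service-role connection nothing else scopes the read to one org.
function buildMatchArgs(
  orgId: string,
  queryEmbedding: number[],
  matchCount = 8,
): MatchArgs {
  if (!orgId || orgId.trim() === "") {
    throw new Error("orgId is required for service-role reads");
  }
  if (queryEmbedding.length === 0) {
    throw new Error("queryEmbedding must not be empty");
  }
  return { org_id: orgId, query_embedding: queryEmbedding, match_count: matchCount };
}

// Server-side retrieval: the org id comes from the authenticated session at
// the API boundary, never from the request body.
async function retrieveChunks(
  client: RpcClient,
  orgId: string,
  queryEmbedding: number[],
  matchCount = 8,
): Promise<unknown> {
  const args = buildMatchArgs(orgId, queryEmbedding, matchCount);
  const { data, error } = await client.rpc("match_document_chunks", args);
  if (error) throw new Error(`retrieval failed: ${error.message}`);
  return data;
}
```

Routing every service-role read through one helper like this keeps the tenant check in a single place that is easy to audit.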

Stripe webhooks as the source of truth

Issue: Trusting client-side state for billing and limits is a security risk; upgrades must be reflected instantly and authoritatively in the database.

Fix: I made an org_questionnaire_usage table the authoritative record of each organization's limits. A Stripe webhook listener on the Next.js server validates the payload signature, then calls a secure RPC that unlocks Enterprise limits for the correct org_id as soon as the event arrives.
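The step after signature validation can be sketched as a pure function that turns a verified event into a quota update. It assumes the event has already been verified (for example with the Stripe Node library's `stripe.webhooks.constructEvent(rawBody, signatureHeader, webhookSecret)`) and that an `org_id` was placed in the object's `metadata` when the session or subscription was created. The tier names and limits are placeholders, not the product's real quotas.

```typescript
// Only the fields this sketch reads; real Stripe events carry many more.
type StripeEventLike = {
  type: string;
  data: { object: { metadata?: Record<string, string> | null } };
};

type Tier = "free" | "enterprise";
type QuotaUpdate = { orgId: string; tier: Tier; monthlyLimit: number };

// Placeholder limits for illustration only.
const TIER_LIMITS: Record<Tier, number> = { free: 5, enterprise: 500 };

// Map a verified event to the row change it should cause, or null when the
// event is irrelevant or cannot be attributed to an organization.
function quotaUpdateFromEvent(event: StripeEventLike): QuotaUpdate | null {
  const orgId = event.data.object.metadata?.org_id; // assumed metadata key
  if (!orgId) return null;

  switch (event.type) {
    case "checkout.session.completed":
      return { orgId, tier: "enterprise", monthlyLimit: TIER_LIMITS.enterprise };
    case "customer.subscription.deleted":
      return { orgId, tier: "free", monthlyLimit: TIER_LIMITS.free };
    default:
      return null; // acknowledge but ignore other event types
  }
}
```

The webhook route would then pass a non-null result to the RPC that writes org_questionnaire_usage. Keeping the mapping pure makes it easy to unit test without calling Stripe or the database.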

User experience

The UI is intentionally premium: a dark-glass enterprise aesthetic, polished drag-and-drop upload zones, and analytics surfaces (including dry-run style mapping views) that feel like a high-end B2B tool rather than a toy demo.

Result

The system takes a chaotic 200-row Excel questionnaire and fills it with answers grounded in private, securely embedded documents. Processing lands around 12 seconds per run, replacing tens of hours of manual work per client and proving that heavy compliance workflows can be automated with the right architecture.