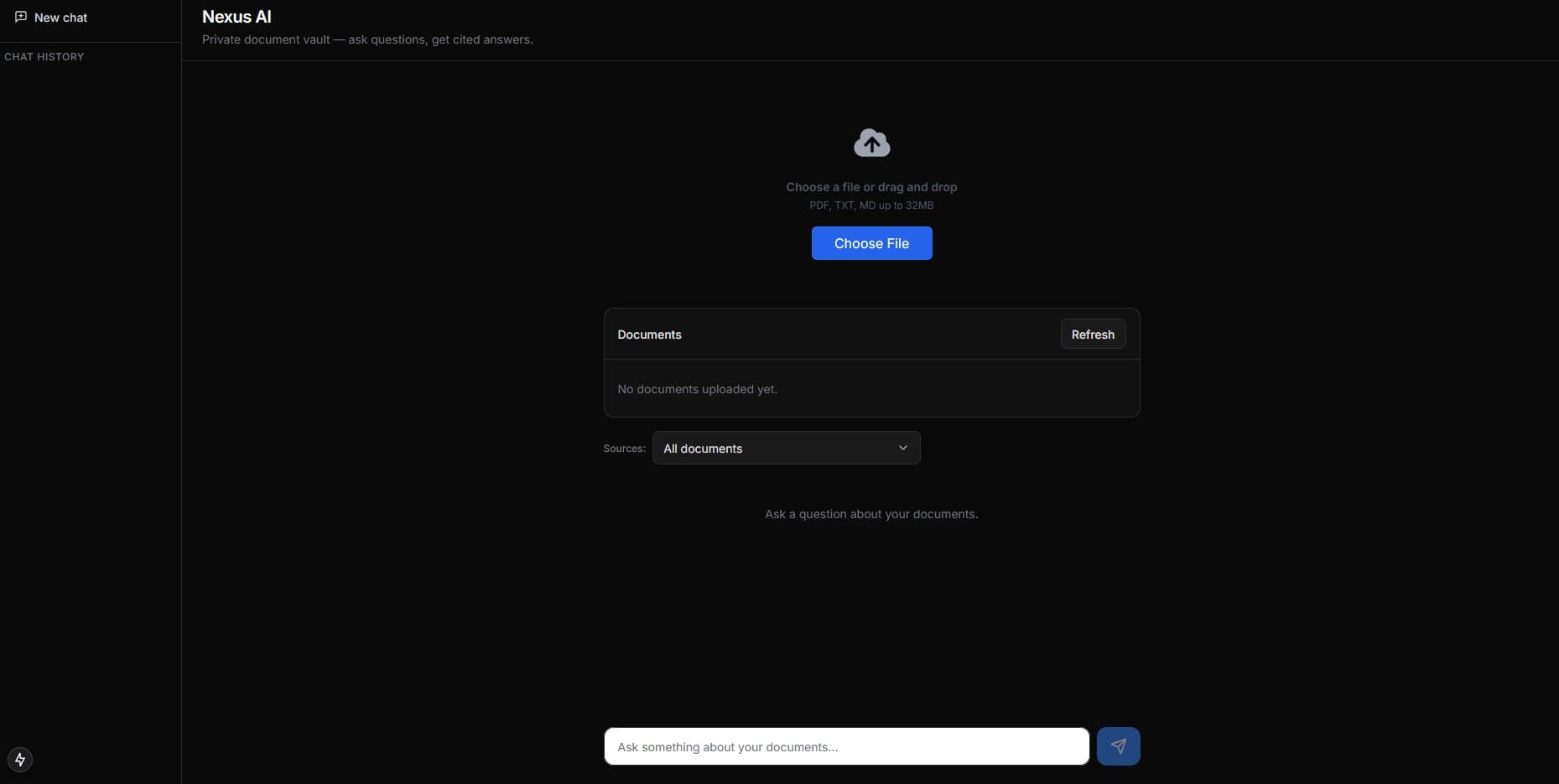

Nexus AI

Private document vault: upload files and get instant, cited answers. RAG-powered chat with hybrid search and conversation memory.

A privacy-first alternative to general-purpose AI tools for sensitive internal documents.

Nexus AI is a private document vault that lets users query internal files and get answers that cite their sources. Users upload PDFs and office documents, then chat against the vault with streaming answers and explicit source badges. It can run as a public demo or enforce sign-in and per-user isolation when auth is enabled. Under the hood, it combines an ingestion pipeline (extract → chunk → embed → store in Pinecone), a guarded retrieval stack (dense vectors with optional sparse recall, keyword fallback, optional reranking), and observability (audit logs and usage events).

Architecture

Features

- Citation-first chat — streaming answers with explicit source badges like [Source: file.pdf, Page N] rendered as source chips.

- Guarded hybrid retrieval — dense vectors (Gemini embeddings) with optional sparse recall, a keyword fallback when vector confidence is low, and optional Cohere reranking.

- Conversation memory — condenses the last 4 messages into a standalone query before retrieval so follow-ups keep context.

- Ingestion with lifecycle tracking — each Document row moves from PROCESSING to COMPLETE (recording chunksCount) or FAILED; extraction supports PDF, TXT, MD, DOCX, and XLSX.

- Enterprise controls — optional auth + multi-tenancy, RBAC (VIEWER restrictions), rate limiting, audit logs, usage events, and API keys for the public endpoints.
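
As an illustration of the citation-first feature, the sketch below shows one way a client could turn `[Source: file.pdf, Page N]` badges in an answer into structured data for source chips. The `SourceCitation` type and `extractCitations` function are hypothetical names, not taken from the Nexus AI codebase, and the pattern assumes file names contain no commas or closing brackets.

```typescript
// Hypothetical shape for one parsed badge (an assumption for this sketch).
interface SourceCitation {
  fileName: string;
  page?: number;
}

// Extracts unique citations from answer text, in order of first appearance.
// Handles both "[Source: file.pdf, Page 3]" and "[Source: notes.md]".
function extractCitations(answer: string): SourceCitation[] {
  // Created per call so the global regex's lastIndex state is never shared.
  const badge = /\[Source:\s*([^,\]]+?)\s*(?:,\s*Page\s+(\d+))?\s*\]/g;
  const seen = new Set<string>();
  const citations: SourceCitation[] = [];

  let match: RegExpExecArray | null;
  while ((match = badge.exec(answer)) !== null) {
    const fileName = match[1].trim();
    const page = match[2] !== undefined ? Number(match[2]) : undefined;

    // De-duplicate repeated references to the same file and page.
    const key = `${fileName}#${page === undefined ? "" : page}`;
    if (seen.has(key)) continue;
    seen.add(key);

    citations.push(page === undefined ? { fileName } : { fileName, page });
  }
  return citations;
}
```

Each returned entry can then be rendered as one chip. De-duplicating by file and page keeps the chip row short when the model cites the same passage more than once.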

Enterprise requirements (checklist)

- Data privacy: documents aren’t used to train public models; tenant data stays isolated.

- Source attribution: every answer includes the originating document (and page when available).

- Authentication + access control: optional sign-in and role restrictions (Admin/Editor/Viewer).

- Auditability: audit logs capture who queried what and when; usage events support cost monitoring.

- Integration: API keys for programmatic query/ingest via public endpoints.

Security & operations

- Multi-tenancy: Pinecone namespaces and DB scoping prevent cross-tenant retrieval.

- Guardrails: rate limiting to control abuse/spend; keyword fallback for exact-term recall.

- Deployment-ready: Vercel-friendly setup plus Docker + CI checks for self-hosted workflows.

- Observability: structured logs, tracing hooks, and persisted usage events for tuning and budgets.
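
The rate-limiting guardrail can be illustrated with a minimal fixed-window limiter keyed by user or API key. This is a sketch under assumptions: the README does not say which algorithm or store Nexus AI uses, the `FixedWindowRateLimiter` class is hypothetical, and an in-memory `Map` only works for a single process (a multi-instance deployment would need a shared store).

```typescript
// Hypothetical in-memory fixed-window rate limiter (illustrative only).
class FixedWindowRateLimiter {
  private readonly limit: number;
  private readonly windowMs: number;
  private readonly now: () => number;
  private readonly windows = new Map<string, { start: number; count: number }>();

  // `now` is injectable so the limiter can be tested without real timers.
  constructor(limit: number, windowMs: number, now: () => number = Date.now) {
    this.limit = limit;
    this.windowMs = windowMs;
    this.now = now;
  }

  // Returns true if the request is allowed, false if the key is over its limit.
  allow(key: string): boolean {
    const t = this.now();
    let w = this.windows.get(key);

    // Start a fresh window on first use or once the previous one has expired.
    if (w === undefined || t - w.start >= this.windowMs) {
      w = { start: t, count: 0 };
      this.windows.set(key, w);
    }

    if (w.count >= this.limit) return false;
    w.count += 1;
    return true;
  }
}
```

A route handler could call `allow(userId)` before running retrieval and respond with HTTP 429 when it returns `false`. Keying by user or API key keeps one tenant's traffic from exhausting another's budget.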

Tech stack

- Next.js (App Router), TypeScript, Tailwind, Vercel AI SDK — UI + streaming chat

- Gemini (gemini-2.5-flash + Vision), text-embedding-004 (768d) — chat, condensation, embeddings

- Pinecone — vector store (dense + optional sparse); optional Cohere rerank

- Prisma + PostgreSQL — users, documents, chat history, audit logs, usage events

Document ingestion pipeline

- Create a Document row with status PROCESSING, then ingest from a file URL; on completion, mark the row COMPLETE (recording chunksCount) or FAILED.

- Extract content by type: PDF (pdf-parse + optional Vision summary for smaller PDFs), DOCX (Mammoth), XLSX (sheet → CSV text), TXT/MD (UTF-8).

- Chunking: fixed-size (1000 characters with a 200-character overlap) by default, or semantic sentence-aware chunking via RAG_CHUNKING=semantic.

- Embed with Gemini text-embedding-004 (768 dims) and upsert to Pinecone with rich metadata (fileName, documentId, optional userId, snippet).
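
To make the fixed chunking mode concrete, here is a minimal sketch of character-based chunking with the 1000/200 settings listed above. The function name `chunkFixed` is hypothetical, and the actual implementation may treat boundaries or whitespace differently.

```typescript
// Splits text into fixed-size character chunks where each chunk repeats the
// last `overlap` characters of the previous one. Illustrative sketch only.
function chunkFixed(text: string, size: number = 1000, overlap: number = 200): string[] {
  if (size <= 0 || overlap < 0 || overlap >= size) {
    throw new RangeError("expected size > 0 and 0 <= overlap < size");
  }

  const chunks: string[] = [];
  const step = size - overlap; // how far the window advances each iteration

  for (let start = 0; start < text.length; start += step) {
    chunks.push(text.slice(start, start + size));
    // Stop once a chunk has reached the end of the text, so no trailing
    // chunk is emitted that only repeats overlap content.
    if (start + size >= text.length) break;
  }
  return chunks;
}
```

With the defaults, a 2500-character document yields three chunks starting at offsets 0, 800, and 1600. The overlap means a sentence that straddles a chunk boundary still appears whole in at least one chunk, as long as it is shorter than the overlap.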